
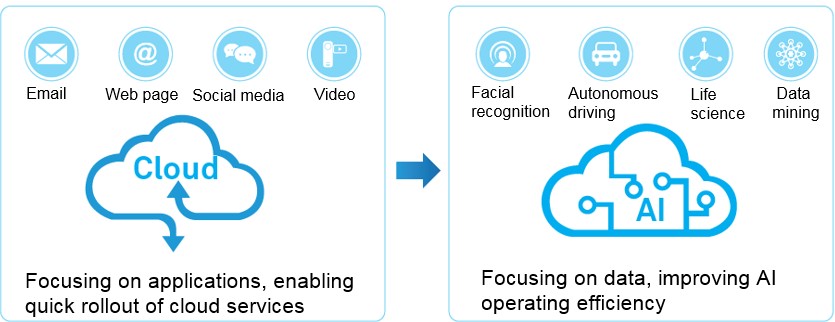

Over the past decade, data center services have shifted from being predominantly web-centric to cloud-centric. Today they are shifting again, this time from the cloud computing era to the intelligent era.

With massive amounts of data generated during digitalization, filtering out invalid data, automatically reorganizing useful information, and then mining the valuable data using Artificial Intelligence (AI) is arguably the key to the intelligent era. According to the Huawei Global Industry Vision (GIV), 97% of large enterprises will use AI by 2025. Indeed, more and more enterprises regard AI as a primary strategy for digital transformation. The ability to leverage AI in decision making, in reshaping business models and ecosystems, and in rebuilding positive customer experiences will be key to successful digital transformation.

Digitalization generates enormous volumes of data. According to Huawei GIV, the amount of global data produced annually will reach 180 ZB in 2025, and the proportion of unstructured data (such as raw voice, video, and image data) will keep increasing, exceeding 95% in the near future. Because manual big data analysis and processing methods cannot handle such volumes, deep learning AI algorithms running on machine computing power can be used to filter out invalid data and automatically reorganize useful information, providing more efficient decision-making suggestions and smarter behavioral guidance. In the intelligent era, the mission of enterprise data centers is evolving from quick service provisioning to efficient data processing.

As AI advances, deep learning server clusters are emerging and high-performance storage media such as Solid-State Drives (SSDs) are being adopted, tightening communication latency requirements to the microsecond (μs) level. For example, in the performance-sensitive High-Frequency Trading (HFT) environment of the financial industry, low latency is key to processing huge trading volumes: on the National Association of Securities Dealers Automated Quotations (NASDAQ), the fastest order transactions complete in approximately 100 μs. Communication latency is therefore the primary factor to consider when building a data center network, and it must be reduced in two ways:

1. The server's internal communication protocol stack needs to change. In AI computing and SSD-based distributed storage systems, data processing over the traditional TCP/IP protocol stack incurs latency of tens of microseconds, so replacing TCP/IP with Remote Direct Memory Access (RDMA) has become industry practice. Compared with TCP/IP, RDMA can improve computing efficiency six- to eight-fold, and its server-side transmission latency of about 1 μs makes it possible to reduce the latency of SSD distributed storage systems from milliseconds to microseconds. As a result, in the latest Non-Volatile Memory Express (NVMe) specifications, RDMA has become a mainstream network transport (via NVMe over Fabrics).

2. To reduce optical fiber transmission latency, data centers need to be deployed near the physical locations of latency-sensitive applications. As a result, distributed data centers have become the norm. Data Center Network (DCN) and Data Center Interconnect (DCI) solutions are increasingly focused on scaling DCN/DCI bandwidth rapidly and smoothly while preserving the zero packet loss, low latency, and high throughput of lossless networks, to meet the requirements of rapid service development. In line with Moore's Law, data center bandwidth keeps growing, and the capacity of a single DCN interface used for DCI will exceed 100 Gbit/s. The DCI network connecting data centers has evolved into a 10 Tbit/s Wavelength Division Multiplexing (WDM) interconnection network.
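
To make the two latency levers above concrete, the following Python sketch adds up a one-way latency budget: protocol-stack processing at the sender and receiver plus fiber propagation delay. Several inputs are illustrative assumptions, not figures from this article: a fiber group index of 1.468 (a typical single-mode value, giving roughly 4.9 μs per km), 30 μs per host as a stand-in for "tens of microseconds" of TCP/IP processing, and treating the ~100 μs NASDAQ figure as a one-way network budget. Switching and queuing delays are ignored.

```python
# Minimal one-way latency-budget sketch. Stack latencies, the fiber index, and
# the budget are illustrative assumptions; switching/queuing delay is ignored.

C_VACUUM_KM_PER_S = 299_792.458  # speed of light in vacuum (km/s)
FIBER_GROUP_INDEX = 1.468        # assumed typical value for single-mode fiber


def fiber_delay_us(distance_km: float) -> float:
    """One-way propagation delay through optical fiber, in microseconds."""
    speed_km_per_s = C_VACUUM_KM_PER_S / FIBER_GROUP_INDEX
    return distance_km / speed_km_per_s * 1e6


def one_way_latency_us(stack_us_per_host: float, distance_km: float) -> float:
    """Sender stack + receiver stack + fiber propagation."""
    return 2 * stack_us_per_host + fiber_delay_us(distance_km)


def max_distance_km(budget_us: float, stack_us_per_host: float) -> float:
    """Longest fiber run that still fits within a one-way latency budget."""
    remaining_us = budget_us - 2 * stack_us_per_host
    if remaining_us <= 0:
        return 0.0  # the protocol stacks alone already exhaust the budget
    return remaining_us / fiber_delay_us(1.0)


if __name__ == "__main__":
    BUDGET_US = 100.0    # assumed one-way budget, based on the ~100 us figure
    TCP_STACK_US = 30.0  # assumed stand-in for "tens of microseconds" per host
    RDMA_STACK_US = 1.0  # ~1 us per host, as cited for RDMA

    print(f"fiber delay per km: {fiber_delay_us(1.0):.2f} us")
    for name, stack in (("TCP/IP", TCP_STACK_US), ("RDMA", RDMA_STACK_US)):
        print(f"{name}: max distance within {BUDGET_US:.0f} us "
              f"= {max_distance_km(BUDGET_US, stack):.1f} km")
```

Under these assumptions, replacing TCP/IP with RDMA more than doubles the usable fiber distance (about 8 km versus about 20 km), which illustrates why both the server protocol stack and the data center location matter.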

Summary: AI-oriented data operations require a lossless network with zero packet loss, low latency, and high throughput. To achieve this, the communication protocol stack inside servers needs to change, and distributed data centers must be connected through DCI.

High-performance services, such as AI and High-Performance Computing (HPC), are increasingly dependent on networks. A lossless network's congestion control algorithm requires collaboration between network adapters and the network devices themselves. As such, the network must be designed from the outset so that Operations and Maintenance (O&M) can quickly and accurately obtain the real-time status of all devices and links across the network, supporting stable service operation and expansion. Optical fiber transmission systems with multi-wavelength multiplexing are widely used in DCI. The service provisioning and maintenance modes of optical systems differ from those of digital networks, and carriers usually have large teams of skilled personnel dedicated to optical network maintenance. In contrast, in the Internet Service Provider (ISP) and finance industries, the IT personnel who build and maintain data center networks typically have far less optical networking experience and expertise. Rapid service provisioning and accurate troubleshooting are therefore key challenges for these industries. As data center construction grows massively, DCI demand is rising on a large scale, and DCI O&M has become one of the key bottlenecks in data center development.
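
One widely deployed example of adapter/network collaboration in RoCEv2 lossless networks is DCQCN: switches mark packets with Explicit Congestion Notification (ECN) as queues build, the receiving NIC returns Congestion Notification Packets (CNPs), and the sending NIC adjusts its rate. The article does not name a specific algorithm, so the following is only a simplified Python sketch of a DCQCN-style sender. The class name, the parameter values, and the merging of DCQCN's separate alpha and rate timers into a single `on_timer` call are assumptions made for brevity.

```python
from dataclasses import dataclass


@dataclass
class DcqcnLikeSender:
    """Simplified DCQCN-style rate control at the sending NIC (sketch only)."""
    line_rate_gbps: float = 100.0
    g: float = 1 / 256            # EWMA gain for the congestion estimate alpha
    rate_ai_gbps: float = 0.5     # additive-increase step (assumed value)
    fast_recovery_steps: int = 5  # quiet periods before additive increase starts

    def __post_init__(self) -> None:
        self.current = self.line_rate_gbps  # RC: current sending rate
        self.target = self.line_rate_gbps   # RT: rate to recover toward
        self.alpha = 1.0                    # estimate of congestion severity
        self.quiet_periods = 0

    def on_cnp(self) -> None:
        """A CNP arrived (a switch ECN-marked our packets): cut the rate."""
        self.target = self.current
        self.current *= 1 - self.alpha / 2
        self.alpha = (1 - self.g) * self.alpha + self.g
        self.quiet_periods = 0

    def on_timer(self) -> None:
        """A period passed with no CNP: decay alpha and move back toward target."""
        self.alpha *= 1 - self.g
        self.quiet_periods += 1
        if self.quiet_periods > self.fast_recovery_steps:
            # Additive increase: raise the target itself, capped at line rate.
            self.target = min(self.target + self.rate_ai_gbps, self.line_rate_gbps)
        # Fast recovery: close half the gap between current and target rate.
        self.current = (self.current + self.target) / 2
```

For example, with the initial `alpha` of 1.0, a first CNP halves a 100 Gbit/s flow to 50 Gbit/s, and one quiet period then recovers it halfway back, to 75 Gbit/s.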

1. The introduction of automatic planning, automatic configuration, and intelligent alarm analysis systems helps to simplify DCI system O&M.

As cloud services are rapidly developed and rolled out, network reconstruction and expansion have become more frequent. Traditional WDM device installation, fiber connection, configuration, and commissioning require professional planning and configuration. An automatic planning and configuration system frees O&M personnel from complex, specialized site deployment work, enables automatic and efficient deployment, and supports rapid service cloudification and frequent capacity expansion. Compared with manual configuration, automatic configuration greatly improves rollout efficiency and configuration accuracy. For example, the error rate of traditional manual fiber connections can reach 5%, and services become unavailable when a fiber is connected incorrectly. Moreover, troubleshooting, cross-checking, and verification are time-consuming and labor-intensive.
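
The cross-check that automatic configuration performs for fiber connections can be sketched as a comparison between the planned fiber map and the connections actually detected on site. In this Python sketch, the `(device, port)` naming and the source of the detected map are hypothetical. The second function treats the 5% figure as an independent per-fiber error rate, which is an assumption, to show how quickly errors accumulate at scale.

```python
from typing import Dict, List, Tuple

# Hypothetical naming: (device name, port name) identifies one end of a fiber.
Port = Tuple[str, str]


def check_fiber_connections(planned: Dict[Port, Port],
                            detected: Dict[Port, Port]) -> List[str]:
    """List every difference between the planned and the detected fiber map."""
    problems = []
    for src, expected in planned.items():
        actual = detected.get(src)
        if actual is None:
            problems.append(f"{src}: not connected, expected {expected}")
        elif actual != expected:
            problems.append(f"{src}: connected to {actual}, expected {expected}")
    # Connections found on site that do not appear in the plan at all.
    for src in sorted(detected.keys() - planned.keys()):
        problems.append(f"{src}: unplanned connection to {detected[src]}")
    return problems


def prob_any_misconnection(per_fiber_error: float, fiber_count: int) -> float:
    """Probability that at least one of N independent manual connections is wrong."""
    return 1 - (1 - per_fiber_error) ** fiber_count
```

Under the independence assumption, a site with 40 manually connected fibers has about an 87% chance (`prob_any_misconnection(0.05, 40)`) of at least one wrong connection, which is why automated verification pays off.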

2. Intelligent O&M systems replace traditional network management, implementing proactive O&M for data centers.

More and more applications run in the cloud, so data centers, as key infrastructure for digitalization, are extremely important, and any fault in DCI often has a severe impact. Efficient, intelligent O&M for DCI shifts O&M from manual to automatic and from passive to proactive. Compared with traditional network monitoring systems, intelligent O&M systems use built-in optical sensors to provide global visualization of the optical network, including optical fibers and optical transmission devices. In addition, intelligent O&M systems warn about changes in an optical network's health, especially in physical parameters such as optical power attenuation and optical wavelength drift; they automatically analyze and filter alarms and automatically determine the root causes of faults based on an experience library. These features reduce the network failure rate and greatly improve network availability.
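
The two intelligent O&M functions described above, health warnings on physical parameters and alarm filtering, can be sketched in Python as follows. The thresholds, field names, and topology map are hypothetical, and the root-cause step swaps in a simple topology-based rule (suppress any alarm whose upstream element is already alarming) in place of the experience library the article mentions.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Illustrative thresholds only (assumed); real limits depend on equipment and channel plan.
MAX_POWER_DROP_DB = 3.0
MAX_WAVELENGTH_DRIFT_GHZ = 2.5


@dataclass
class OpticalReading:
    link: str
    rx_power_dbm: float           # current received optical power
    baseline_rx_power_dbm: float  # received power recorded at commissioning
    wavelength_offset_ghz: float  # drift from the nominal channel frequency


def health_warnings(readings: List[OpticalReading]) -> List[str]:
    """Warn on extra attenuation or wavelength drift before services fail."""
    warnings = []
    for r in readings:
        drop_db = r.baseline_rx_power_dbm - r.rx_power_dbm
        if drop_db > MAX_POWER_DROP_DB:
            warnings.append(f"{r.link}: attenuation increased by {drop_db:.1f} dB")
        if abs(r.wavelength_offset_ghz) > MAX_WAVELENGTH_DRIFT_GHZ:
            warnings.append(f"{r.link}: wavelength drift {r.wavelength_offset_ghz:+.1f} GHz")
    return warnings


def root_cause_alarms(alarms: Dict[str, str],
                      upstream: Dict[str, str]) -> Dict[str, str]:
    """Keep only alarms with no alarming element anywhere upstream of them."""
    roots = {}
    for element, alarm in alarms.items():
        seen = {element}  # guards against loops in the topology map
        parent: Optional[str] = upstream.get(element)
        suppressed = False
        while parent is not None and parent not in seen:
            if parent in alarms:
                suppressed = True  # a fault further upstream explains this alarm
                break
            seen.add(parent)
            parent = upstream.get(parent)
        if not suppressed:
            roots[element] = alarm
    return roots
```

For instance, if a fiber span reports loss of signal and the amplifier and channel downstream of it also raise alarms, only the fiber-span alarm is kept as the probable root cause.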

Summary: Data center network O&M urgently needs automatic configuration and maintenance tools that adjust configurations in real time, quickly locate faults, and simplify the O&M of lossless networks, thereby supporting the rapid development of data center services in the cloud era.