Data centers play a vital role in providing the technical support required for AI innovation, and AI, in turn, brings considerable benefits to data centers. For example, telemetry reports abnormalities to a centralized, intelligent O&M platform for big data analytics. This greatly improves the efficiency of network operations, simplifies O&M, and lowers labor costs.

However, as computing and storage converge, data center server clusters are expanding in size. A 1,000-fold increase in analytical traffic, increasingly rapid information transfer, and information redundancy mean that the scale of intelligent O&M platforms is also rapidly increasing.

The heavy performance burden on intelligent O&M platforms compromises their processing efficiency. To reduce the pressure on intelligent O&M platforms, network edge devices need to have intelligent analysis and decision-making capabilities, which are key to improving O&M efficiency.

The accuracy of a model in the intelligent O&M platform varies with the data distribution, application environment, and hardware environment. The intelligent network analysis system nonetheless requires that accuracy stay within an expected range.

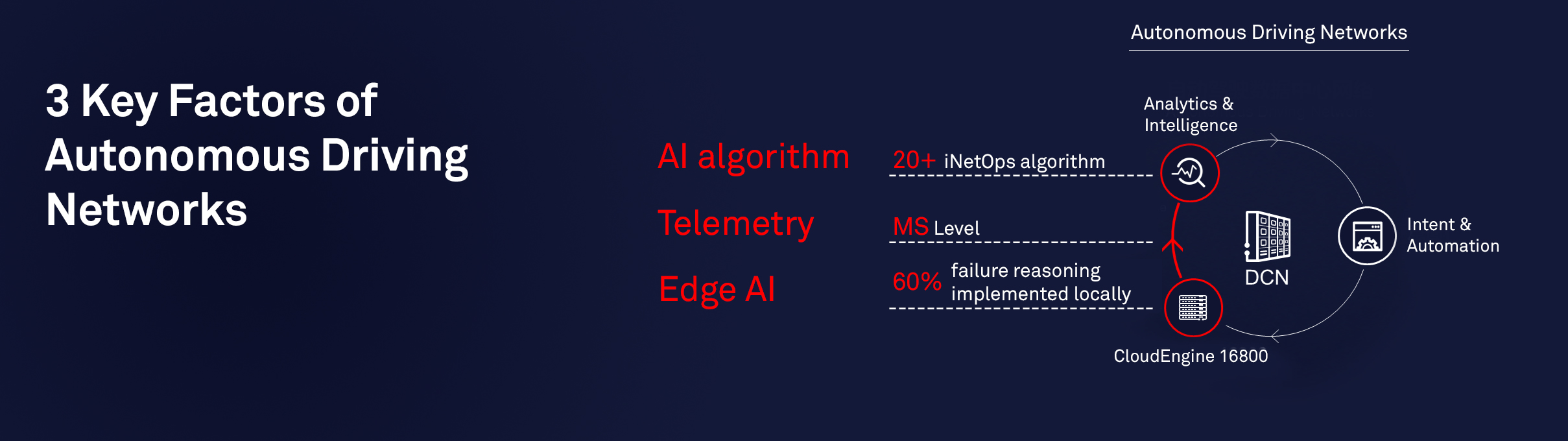

The CloudEngine 16800 is the industry’s first data center switch built for the AI era. It raises the intelligence of devices deployed at the network edge, enabling the switch to perform local inference and make decisions in real time. Telemetry data is first preprocessed locally on the switch and then sent to the intelligent O&M platform for secondary processing based on a fault-case library. This distributed architecture lets forwarding devices work with the intelligent O&M platform to implement intelligent network O&M.
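The local preprocessing step described above can be illustrated with a minimal sketch. It is not Huawei’s implementation: the function name `preprocess_locally`, the z-score rule, and the threshold value are all assumptions chosen only to show how an edge device might reduce raw telemetry to a compact summary before forwarding it to a central platform.

```python
from statistics import mean, pstdev


def preprocess_locally(samples, z_threshold=3.0):
    """Summarize raw telemetry samples on the edge device (hypothetical helper).

    Returns a compact summary plus only those samples whose z-score exceeds
    z_threshold, so the central platform receives far less data than the
    raw stream.
    """
    mu = mean(samples)
    sigma = pstdev(samples)
    if sigma == 0:
        # All samples are identical, so nothing stands out.
        anomalies = []
    else:
        anomalies = [x for x in samples if abs(x - mu) / sigma > z_threshold]
    return {"count": len(samples), "mean": mu, "anomalies": anomalies}


# Example: twenty normal latency readings and one spike (illustrative values).
summary = preprocess_locally([10] * 20 + [100])
```

In this sketch only the summary dictionary would be sent upstream, which mirrors the idea of easing the load on the centralized platform by filtering at the edge.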

Intelligent fault analysis relies on a professional experience library because deep learning models need large amounts of training data to perform well.

Despite breakthroughs in algorithms, AI has not yet been applied on a large scale because training samples are insufficient. Combining machine learning with a professional experience library can improve the efficiency of the intelligent O&M platform.
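One way to picture this combination is a lookup that consults the experience library first and falls back to a learned model only when no known case matches. The sketch below is purely illustrative: the library entries, the `diagnose` function, and the score threshold are hypothetical and are not taken from iNetOps or FabricInsight.

```python
# Hypothetical fault-case library: symptom signature -> known root cause.
FAULT_CASE_LIBRARY = {
    frozenset({"crc_errors", "link_flap"}): "faulty optical module or cable",
    frozenset({"queue_drops", "pfc_storm"}): "congestion caused by a PFC storm",
}


def diagnose(symptoms, model_score=None, score_threshold=0.8):
    """Return a root-cause guess, preferring the experience library.

    symptoms: iterable of observed symptom names.
    model_score: optional anomaly score from a learned model (assumed 0..1).
    """
    observed = frozenset(symptoms)
    # A library case matches when all of its symptoms were observed.
    matches = [(sig, cause) for sig, cause in FAULT_CASE_LIBRARY.items()
               if sig <= observed]
    if matches:
        # Prefer the most specific case (the largest matching signature).
        _, cause = max(matches, key=lambda m: len(m[0]))
        return {"source": "experience_library", "root_cause": cause}
    if model_score is not None and model_score >= score_threshold:
        # No known case: rely on the model's anomaly score instead.
        return {"source": "model", "root_cause": "anomaly flagged by model"}
    return {"source": "none", "root_cause": None}
```

The design choice here is that curated cases need no training data, so they can cover known faults while the model handles patterns the library has not yet recorded.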

Using the iNetOps intelligent O&M algorithm, the system performs root cause analysis of 72 typical faults within seconds, and the automatic fault locating rate reaches 90 percent.

Combining the CloudEngine 16800’s local intelligence with the centralized network analyzer, FabricInsight, yields a distributed AI O&M architecture that significantly improves the flexibility and “deployability” of O&M systems and greatly simplifies O&M, accelerating the advent of autonomous driving networks.

A fully connected, intelligent world is fast approaching, and data centers will become the core of new infrastructures such as 5G and AI.

The CloudEngine 16800 is the industry’s first AI switch to implement distributed O&M for data center networks based on an AI chip. The integration of Huawei data center networks and AI technology is progressing from localized exploration toward full application.

As AI technologies mature, they will gain wider recognition and eventually earn their place.

The CloudEngine 16800 brings together AI and network technologies, and its release marks a milestone in the generational evolution of switches.

As the CloudEngine 16800 enters large-scale commercial use, it is expected to deliver substantial technical complementarity and spillover effects, ushering in a golden period for AI applications and network efficiency improvements.