This blog series on Network Attached Storage (NAS) looks under the hood of the latest tech in file services & storage and dives into how enterprises can skyrocket efficiency with NAS.

Network-attached storage (NAS) and storage area network (SAN) are the most common types of network storage architectures, and it’s natural to wonder what main advantages NAS has over SAN.

NAS shares file data more efficiently, is better suited to unstructured data such as code files and images, and is easier to access because it typically runs over standard Ethernet.

Because a SAN typically uses high-speed Fibre Channel connections, it is usually faster than the TCP/IP-over-Ethernet connections used by NAS. Connection speed aside, NAS file systems organize data in directory trees, so retrieving a file means walking down the tree one level at a time, which adds latency.
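To make the tree-structure point concrete, here is a minimal Python sketch, assuming a toy in-memory directory tree built from nested dictionaries. The `resolve` function and the sample paths are hypothetical and not part of any NAS product. Each path component costs one lookup, so deeper files need more lookups; on a real system, each lookup can mean a metadata read.

```python
def resolve(tree: dict, path: str) -> tuple[object, int]:
    """Walk a nested-dict directory tree one component at a time.

    Returns the node found at `path` and the number of directory lookups
    performed. Each lookup stands in for a metadata read that adds latency.
    """
    node = tree
    lookups = 0
    for name in path.strip("/").split("/"):
        if not isinstance(node, dict) or name not in node:
            raise FileNotFoundError(path)
        node = node[name]
        lookups += 1
    return node, lookups


# Hypothetical tree: a file four levels deep.
tree = {"projects": {"nas": {"src": {"main.c": "file contents"}}}}
print(resolve(tree, "/projects/nas/src/main.c"))  # ('file contents', 4)
```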

And although high-speed Ethernet can resolve most network transmission bottlenecks, enterprises still urgently need a faster file system layer. Here, we explore how to get there and the best practices for achieving a better, faster NAS file system.

Conventional NAS file systems run on an active-passive architecture, in which a specific controller or node, known as the owner controller, processes all file workloads in a file system. Before you deploy services on such a NAS system, you must first plan which controller owns which file system so that performance and workload are balanced across the controllers.

But because each file system is owned by a specific controller, it can only use that controller’s resources, which limits its performance. For performance-intensive applications, this can become a bottleneck.

This architecture also does not support a global namespace. When you have multiple file systems, they usually carry different service loads, which makes it difficult to balance load across the whole system.
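The owner-controller problem can be sketched with a toy model. In this hypothetical Python example, `per_controller_load`, the controller names and the request counts are all made up for illustration. The function simply totals requests by owner controller, showing how two file systems with different loads leave one controller far busier than the other.

```python
from collections import Counter


def per_controller_load(owners: dict[str, str], requests: dict[str, int]) -> Counter:
    """Sum request counts per owner controller (active-passive model).

    owners   maps file system name -> owner controller (planned by hand)
    requests maps file system name -> number of requests it receives
    Every request for a file system can only be served by its owner.
    """
    load = Counter()
    for fs, count in requests.items():
        load[owners[fs]] += count
    return load


# Hypothetical plan: two file systems, one owner controller each.
owners = {"fs_home": "ctrl_A", "fs_build": "ctrl_B"}
requests = {"fs_home": 9000, "fs_build": 1000}
print(per_controller_load(owners, requests))
```

Because each file system’s requests can only go to its owner, the only way to even out this imbalance in the model is to re-plan ownership by hand.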

Fortunately, there’s a way to overcome these challenges.

NAS systems that run on Huawei flash storage use distributed file systems, which can be globally shared by all controllers in a cluster.

“Distributed” refers to both the front and back ends of a storage system. In the front end, an authorized client can access all of the files in the cluster from any controller, which simplifies the network.

In the back end, file systems are no longer owned by any controller. Instead, a balancing algorithm evenly distributes the directories and files in each file system across all controllers in the cluster; this is how Huawei’s exclusive cross-controller load balancing feature works. As a result, a single file system can make full use of every controller in the storage cluster, for optimal performance and capacity.
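The post does not describe the balancing algorithm itself, so the following Python sketch uses a stable hash as a stand-in, assuming a hypothetical four-controller cluster. It illustrates the general idea behind ownerless placement: any controller can compute where a directory lives without consulting an owner table, and one file system’s directories spread across every controller. The `place` function and `CONTROLLERS` list are illustrative names only.

```python
import hashlib
from collections import Counter

CONTROLLERS = ["ctrl_A", "ctrl_B", "ctrl_C", "ctrl_D"]  # hypothetical cluster


def place(path: str, controllers: list[str]) -> str:
    """Map a directory path to a controller with a stable hash.

    A stand-in for the (unpublished) balancing algorithm: every controller
    computes the same answer for the same path, so no owner table is needed.
    """
    digest = hashlib.sha256(path.encode("utf-8")).digest()
    return controllers[int.from_bytes(digest[:8], "big") % len(controllers)]


# One file system with 1,000 hypothetical directories.
dirs = [f"/fs1/dir{i}" for i in range(1000)]
spread = Counter(place(d, CONTROLLERS) for d in dirs)
print(spread)
```

With a well-mixed hash, each of the four controllers ends up with roughly a quarter of the directories. Huawei’s actual algorithm may work quite differently.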

Distributed file systems free you from having to meticulously plan each owner controller. Instead, you can deploy a single file system or multiple file systems whenever or wherever you want, and the NAS system will automatically allocate the file workloads for you.

Directories and files in a file system are carried by file service partitions (FSPs), which are logical units on the CPUs. Each CPU on the storage controllers has the same number of FSPs, so the key question is how to distribute directories and files among them.
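As a rough model of the FSP layout, the Python sketch below enumerates every FSP in a hypothetical cluster. The `FSP` class, the `build_fsps` function and the counts (2 controllers, 2 CPUs each, 4 FSPs per CPU) are illustrative assumptions; the post only states that every CPU has the same number of FSPs.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class FSP:
    """One file service partition, identified by where it lives (hypothetical IDs)."""
    controller: int
    cpu: int
    index: int


def build_fsps(controllers: int, cpus_per_controller: int, fsps_per_cpu: int) -> list[FSP]:
    """Enumerate every FSP in the cluster; each CPU gets the same number of FSPs."""
    return [
        FSP(c, p, i)
        for c, p, i in product(range(controllers), range(cpus_per_controller), range(fsps_per_cpu))
    ]


fsps = build_fsps(controllers=2, cpus_per_controller=2, fsps_per_cpu=4)
print(len(fsps))  # 16
```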

The Huawei NAS solution offers two load distribution modes, load balance mode and performance mode, and you can select either one on demand to suit your needs.

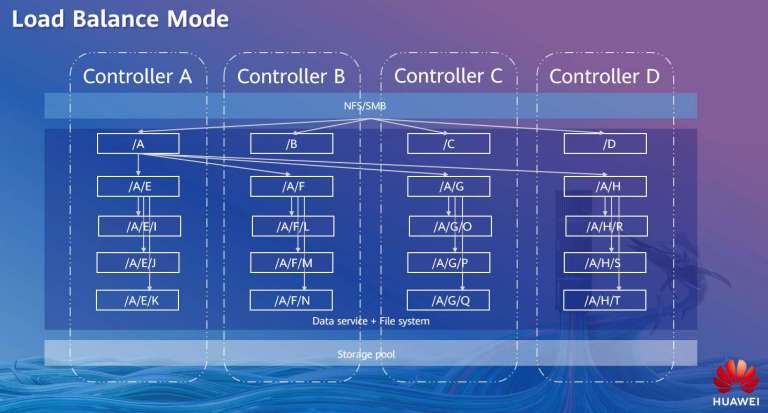

In load balance mode, the storage system prioritizes global load balancing: the first two levels of directories in a file system are evenly distributed across every FSP on all CPUs, so every controller carries a similar workload.

Directories at the third level and deeper, along with their files, are carried by the same CPU as their parent directory. When a client requests a file deep in the directory tree, the storage system therefore does not waste time forwarding the request among controllers and CPUs below the second level, which cuts file access latency.
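The two rules of load balance mode can be expressed as a small placement function. This is a minimal Python sketch under stated assumptions: the cluster size, the round-robin dealing for levels 1 and 2, and the CRC32 hash used to pick an FSP within the parent’s CPU are our own choices, not Huawei’s implementation. What does follow from the post is that the first two levels spread over every FSP on every CPU, while anything at level 3 or deeper stays on its parent’s CPU. `LoadBalancePlacer` is a hypothetical name.

```python
import zlib

# Hypothetical cluster: 2 controllers, 2 CPUs per controller, 4 FSPs per CPU.
# FSP IDs are (controller, cpu, fsp); the list is ordered so that consecutive
# slots alternate between controllers and CPUs.
CONTROLLERS, CPUS, FSPS_PER_CPU = 2, 2, 4
ALL_FSPS = [(c, p, i) for i in range(FSPS_PER_CPU) for p in range(CPUS) for c in range(CONTROLLERS)]


class LoadBalancePlacer:
    """Sketch of load balance mode placement (assumed details noted inline)."""

    def __init__(self) -> None:
        self.placement: dict[str, tuple[int, int, int]] = {}
        self.next_slot = 0

    def create(self, path: str) -> tuple[int, int, int]:
        """Place a new directory or file; its parent must already exist."""
        parts = path.strip("/").split("/")
        if len(parts) <= 2:
            # Levels 1 and 2: deal round-robin over every FSP on every CPU.
            fsp = ALL_FSPS[self.next_slot % len(ALL_FSPS)]
            self.next_slot += 1
        else:
            # Level 3+: inherit the parent's controller and CPU (per the post);
            # the FSP within that CPU is picked by a stable hash (our assumption).
            parent = "/" + "/".join(parts[:-1])
            controller, cpu, _ = self.placement[parent]
            fsp = (controller, cpu, zlib.crc32(path.encode("utf-8")) % FSPS_PER_CPU)
        self.placement[path] = fsp
        return fsp


placer = LoadBalancePlacer()
for p in ["/a", "/b", "/a/x", "/a/x/deep", "/a/x/deep/file.txt"]:
    print(p, placer.create(p))
```

In this run, `/a` and `/b` land on different controllers, while everything under `/a/x` stays on the same controller and CPU as `/a/x`, so requests for those deeper entries never need to hop between CPUs.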

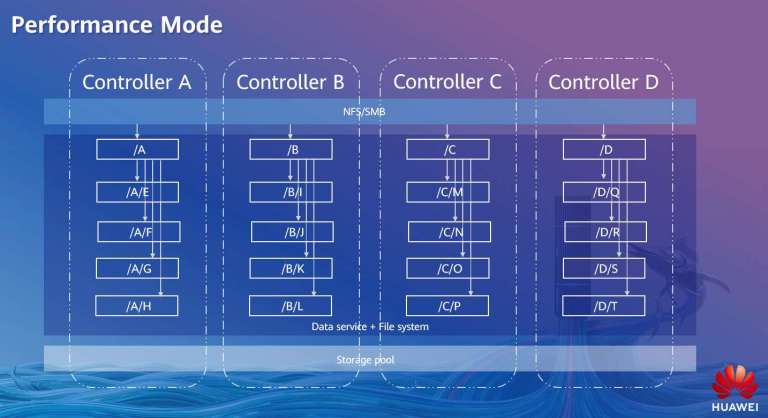

As you’d expect, performance mode aims to get the greatest performance out of the storage system. Unlike load balance mode, which scatters the first two levels of directories, performance mode places a first-level directory and all of its subdirectories and files on one controller, which alone processes the access requests from a client. This avoids forwarding requests across controllers, which in turn reduces latency.

That controller allocates newly created directories and files to all of its CPUs and FSPs in round-robin fashion, which balances the load within the controller.
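Performance mode can be sketched the same way. In this hypothetical Python model, each top-level directory is pinned to the controller serving the client that created it (an assumption about how that controller is chosen), its subdirectories and files inherit that controller, and the controller deals new entries round-robin across its own CPUs and FSPs. `PerformancePlacer` and its parameters are illustrative names only.

```python
from collections import defaultdict


class PerformancePlacer:
    """Sketch of performance mode placement under stated assumptions."""

    def __init__(self, cpus_per_controller: int, fsps_per_cpu: int) -> None:
        # Local (cpu, fsp) slots, interleaved so consecutive entries alternate CPUs.
        self.local_fsps = [(cpu, fsp) for fsp in range(fsps_per_cpu) for cpu in range(cpus_per_controller)]
        self.next_slot: dict[int, int] = defaultdict(int)  # controller -> round-robin cursor
        self.placement: dict[str, tuple[int, int, int]] = {}

    def create(self, path: str, client_controller: int) -> tuple[int, int, int]:
        """Place a new entry; client_controller is only used for top-level directories."""
        parts = path.strip("/").split("/")
        if len(parts) == 1:
            controller = client_controller  # assumption: pin to the client's controller
        else:
            controller = self.placement["/" + parts[0]][0]  # inherit the top-level directory's controller
        # Round-robin across this controller's CPUs and FSPs only.
        cursor = self.next_slot[controller]
        cpu, fsp = self.local_fsps[cursor % len(self.local_fsps)]
        self.next_slot[controller] = cursor + 1
        self.placement[path] = (controller, cpu, fsp)
        return self.placement[path]


placer = PerformancePlacer(cpus_per_controller=2, fsps_per_cpu=4)
for path in ["/proj", "/proj/src", "/proj/src/main.c", "/proj/docs"]:
    print(path, placer.create(path, client_controller=1))
```

Here every entry under `/proj` stays on controller 1, and consecutive entries alternate between that controller’s two CPUs, matching the intra-controller balancing described above.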

In most cases, load balance mode is the right choice for NAS workloads, as it delivers both good global load balancing and low latency. Performance mode delivers higher speeds when performance must take priority. Huawei’s management software can help you apply best practices to your configuration.

Hopefully you’ve found this post on NAS basics useful. Stay tuned for more articles in this series, as we pull back the curtain on the secrets behind NAS storage.

Disclaimer: The views and opinions expressed in this article are those of the author and do not necessarily reflect the official policy, position, products, and technologies of Huawei Technologies Co., Ltd. If you need to learn more about the products and technologies of Huawei Technologies Co., Ltd., please visit our website at e.huawei.com or contact us.