
This blog series on Network Attached Storage (NAS) looks under the hood of the latest tech in file services & storage and dives into how enterprises can skyrocket efficiency with NAS.

In a world full of unforeseen circumstances, a successful continuity strategy is likely centered on avoiding downtime, specifically by using backups. Consider how we often sit safe in the knowledge that a spare tire, a standby phone charger, or a second mobile will get us through the day. You may also realize that such plan Bs, while necessary, more often than not go unused because the emergency never comes.

The storage industry is the same. Diversified data types have popularized network-attached storage (NAS). A second storage device is used to improve both NAS storage reliability and service guarantee, which is why NAS on a dual-system architecture has become a mainstream choice.

However, does each dual-system architecture really resolve common pain points?

Today, a dual-system architecture can be deployed in active-passive (AP) or active-active (AA) mode. Most vendors provide only AP mode for NAS, in which one array serves requests while the other merely stands by as a backup. This suits certain scenarios, but in most cases it only gives the impression of an AA setup: the standby hardware sits largely idle, and the design shows clear limitations in load balancing, consistency, and reliability.

To date, Huawei is the only vendor to provide the AA mode for NAS. Let’s look at its NAS HyperMetro solution in more detail.

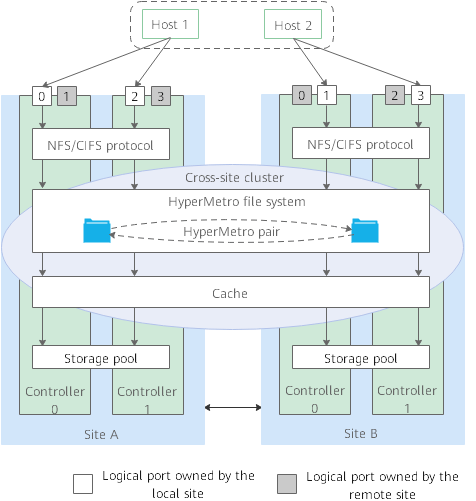

Huawei NAS HyperMetro is a unique offering as it provides both AP and AA modes for two storage arrays in a cross-site cluster. In the AA setup, you can create a NAS HyperMetro pair or a NAS HyperMetro file system to provide active-active data access to hosts at both sites, and these hosts access the file system via logical ports of both arrays. Each array has logical ports owned by either the local or remote array, which allows both arrays to be accessed from any host. In this solution, directories and files of the HyperMetro file system are distributed to different owning controllers. So, assuming a host locally reads data owned by a remote controller, the request is finally processed by the owning controller at the remote array. When a controller writes data to the storage pool, so does the same controller at the other array. This ensures both arrays stay active regardless of when data is read or written, improving hardware utilization and achieving global load balancing.
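To make the ownership idea more concrete, here is a minimal Python sketch of how a request arriving at either array could be routed to the owning controller, with writes landing in the storage pools of both arrays. It is only an illustration under stated assumptions: the array and controller names, the hash-based ownership rule, and the `HyperMetroSketch` class are all hypothetical and are not taken from Huawei's implementation.

```python
import hashlib

ARRAYS = ("site_a", "site_b")        # hypothetical names for the two arrays
CONTROLLERS = ("ctrl_0", "ctrl_1")   # hypothetical controllers present on each array
OWNERS = [(a, c) for a in ARRAYS for c in CONTROLLERS]


def owner_of(path):
    """Map a path to its owning (array, controller) with a stable hash (assumed rule)."""
    digest = hashlib.sha256(path.encode("utf-8")).digest()
    return OWNERS[int.from_bytes(digest[:4], "big") % len(OWNERS)]


class HyperMetroSketch:
    """Toy model: any array accepts a request, the owning controller processes it."""

    def __init__(self):
        self.pools = {a: {} for a in ARRAYS}  # one storage pool per array
        self.forwarded = 0                    # requests that crossed sites

    def _route(self, entry_array, path):
        array, controller = owner_of(path)
        if array != entry_array:
            self.forwarded += 1               # processed at the other array
        return array, controller

    def write(self, entry_array, path, data):
        owner = self._route(entry_array, path)
        # The same controller on each array writes to its local storage pool.
        for array in ARRAYS:
            self.pools[array][path] = data
        return owner

    def read(self, entry_array, path):
        array, _ = self._route(entry_array, path)
        return self.pools[array][path]


if __name__ == "__main__":
    cluster = HyperMetroSketch()
    cluster.write("site_a", "/projects/report.docx", b"v1")
    print(cluster.read("site_b", "/projects/report.docx"))
```

Because ownership is spread across controllers on both arrays, neither array sits idle in this model, which is the load-balancing property described above.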

You may want to ask: How do two active arrays stay reliably consistent in real time?

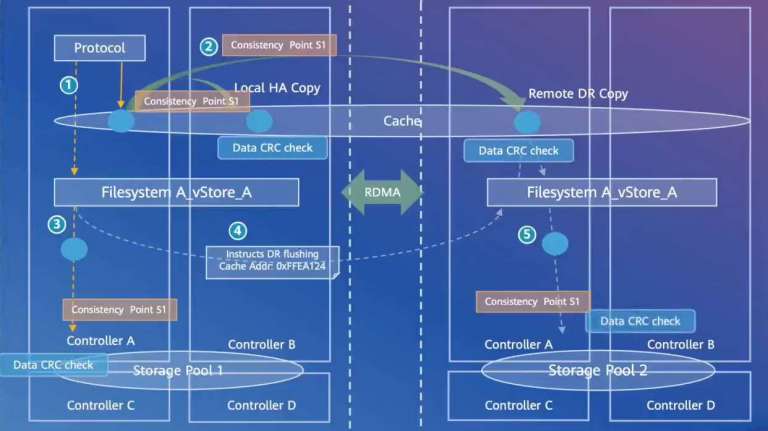

Service data aside, consistency starts as early as cluster creation, because basic information, such as permissions, is synchronized to both arrays at that time. The joy of this solution is that you do not have to repeat configurations on each array.

When a host writes data to the cache of a controller, the data is mirrored in real time to another controller at the local array and to the same controller at the remote array. This means three copies of the same data are available. Yet the solution goes further. Each time data is mirrored, a consistency point is recorded. In other processes, such as writing data to the storage pool, consistency points help locate the desired data. Further verification then adds reliability: each process, including mirroring, reading, and writing, undergoes a cyclic redundancy check (CRC), which detects errors that occur during transmission and helps keep data consistent.
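The mirroring-plus-CRC flow can be sketched in a few lines of Python. This is a simplified model, not the product's actual code: the copy names, the `MirroredCacheSketch` class, and the `corrupt_link` parameter (used only to simulate a transmission error) are assumptions made for illustration. The checksum itself uses the standard library's `zlib.crc32`.

```python
import zlib

# Hypothetical labels for the three cache copies described above.
COPIES = ("owner_local", "peer_local", "same_ctrl_remote")


class MirroredCacheSketch:
    def __init__(self):
        self.caches = {name: {} for name in COPIES}
        self.consistency_point = 0  # incremented after each successful mirror

    def write(self, key, payload, corrupt_link=None):
        """Mirror payload to all three caches, verifying a CRC on each transfer."""
        checksum = zlib.crc32(payload)
        staged = {}
        for name in COPIES:
            received = payload
            if name == corrupt_link:
                # Simulate a single flipped bit on this link.
                received = bytes([payload[0] ^ 0x01]) + payload[1:]
            if zlib.crc32(received) != checksum:
                raise IOError(f"CRC mismatch on link to {name}")
            staged[name] = (received, checksum)
        # Commit only after every copy has passed verification.
        for name, entry in staged.items():
            self.caches[name][key] = entry
        self.consistency_point += 1
        return self.consistency_point

    def read(self, name, key):
        """Return the payload from one copy, re-checking its CRC first."""
        payload, checksum = self.caches[name][key]
        if zlib.crc32(payload) != checksum:
            raise IOError(f"CRC mismatch reading {key} from {name}")
        return payload


if __name__ == "__main__":
    cache = MirroredCacheSketch()
    point = cache.write("block-1", b"hello")
    print(point, cache.read("same_ctrl_remote", "block-1"))
```

In this sketch a write only advances the consistency point once all three copies have been verified, so a corrupted transfer leaves the previous consistent state untouched.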

Last but not least, you may still be wondering how a balanced architecture and a set of data-related techniques can guarantee always-on services. The answer lies in the arbitration design of NAS HyperMetro.

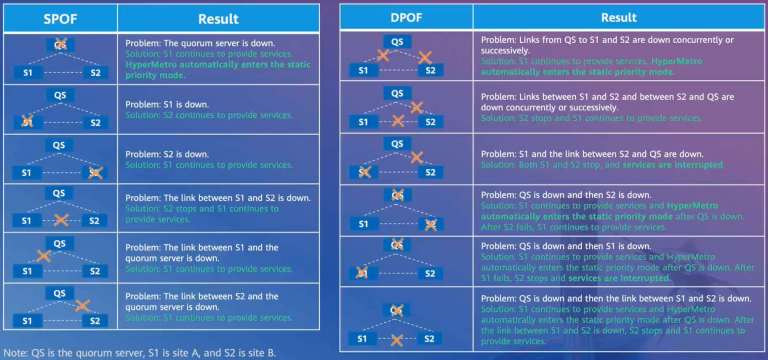

A quorum server is critical to the whole solution. If a fault occurs, the quorum server arbitrates which array continues to work, so that a single point of failure does not interrupt services, as shown in the graphic. Even with dual points of failure, services stay up in most cases.

Take the single failure of S1 as an example. When S2 detects that S1 is abnormal, it requests arbitration from the quorum server. Once S2 is granted permission to take over services, it re-obtains the necessary data from the hosts or recovers it directly from the mirrored cache. Services are then switched to S2 without downtime. S2 records the data changes made after the switchover, so that services can be automatically switched back to S1 once the fault is cleared.
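The S1 failure walkthrough can be modeled roughly as follows. This is a sketch under assumptions: the `QuorumServer`, `Array`, `fail_over`, `write_after_switchover`, and `fail_back` names are hypothetical, and the "first requester wins" arbitration rule is a common simplification rather than a description of how the actual quorum server decides.

```python
class QuorumServer:
    """Grants takeover permission to at most one array at a time (assumed rule)."""

    def __init__(self):
        self.holder = None

    def request_takeover(self, array_name):
        if self.holder is None:
            self.holder = array_name
        return self.holder == array_name

    def release(self):
        self.holder = None


class Array:
    def __init__(self, name):
        self.name = name
        self.serving = True
        self.data = {}
        self.change_log = []  # paths changed while the peer was down


def fail_over(survivor, failed, quorum):
    """Survivor asks the quorum server for permission before taking over."""
    failed.serving = False
    granted = quorum.request_takeover(survivor.name)
    survivor.serving = granted  # without permission the survivor must stop too
    return granted


def write_after_switchover(survivor, path, payload):
    """Serve a write on the survivor and record the change for later switchback."""
    survivor.data[path] = payload
    survivor.change_log.append(path)


def fail_back(survivor, recovered, quorum):
    """Replay recorded changes to the recovered array, then resume dual service."""
    for path in survivor.change_log:
        recovered.data[path] = survivor.data[path]
    survivor.change_log.clear()
    recovered.serving = True
    quorum.release()


if __name__ == "__main__":
    quorum, s1, s2 = QuorumServer(), Array("S1"), Array("S2")
    if fail_over(s2, s1, quorum):
        write_after_switchover(s2, "/share/a.txt", b"new")
    fail_back(s2, s1, quorum)
    print(s1.serving, s1.data)
```

The point of routing the decision through a third party is that only one array can ever hold the takeover permission, which avoids both arrays serving divergent data if they merely lose contact with each other.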

With reliable processing throughout the whole service cycle, Huawei NAS HyperMetro avoids redundant and urgent manual actions, freeing you from worrying about service stability when part of your NAS storage system goes down.

This blog series aims to bring you new insights into some of Huawei’s brightest tech and help fuel your knowledge of NAS storage. If you found this blog on NAS HyperMetro useful for your future business planning, remember to stay tuned for more posts.

Disclaimer: The views and opinions expressed in this article are those of the author and do not necessarily reflect the official policy, position, products, and technologies of Huawei Technologies Co., Ltd. If you need to learn more about the products and technologies of Huawei Technologies Co., Ltd., please visit our website at e.huawei.com or contact us.